There seems to be some confusion here about what PTX is – it does not bypass the CUDA platform at all, nor does it diminish NVIDIA’s monopoly. CUDA is a programming environment for NVIDIA GPUs, but many people say CUDA to mean its C/C++ extension (CUDA can be thought of as a C/C++ dialect here). PTX is NVIDIA-specific and sits at a similar level to LLVM’s IR. If anything, DeepSeek is more dependent on NVIDIA than everyone else, since PTX is tightly coupled to their specific GPUs. Things like ZLUDA (an effort to run CUDA code on AMD GPUs) won’t work. This is not a feel-good story.

I don’t think anyone is saying CUDA as in the platform, but as in the API for higher level languages like C and C++.

PTX is a close-to-metal ISA that exposes the GPU as a data-parallel computing device and, therefore, allows fine-grained optimizations, such as register allocation and thread/warp-level adjustments, something that CUDA C/C++ and other languages cannot enable.

Some commenters on this post are clearly not aware of PTX being a part of the CUDA environment. If you know this, you aren’t who I’m trying to inform.

aah I see them now

I thought CUDA was NVIDIA-specific too, and for a general version you had to use OpenACC or something.

CUDA is proprietary to NVIDIA, but they may be open to licensing it? I think?

I think the thing that Jensen is getting at is that CUDA is merely a set of APIs. Other hardware manufacturers can re-implement the CUDA APIs if they really want to (especially since AFAIK, Google v. Oracle held that re-implementing an API can be fair use). In fact, AMD’s HIP implements many of the same APIs as CUDA, and they ship a tool (HIPIFY) to convert code written for CUDA to HIP instead.

Of course, this does not guarantee that code originally written for CUDA is going to perform well on other accelerators, since it likely was implemented with NVIDIA’s compute model in mind.
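To make the "same APIs under different names" point concrete, here is a deliberately tiny Python sketch of the renaming idea. It is not how HIPIFY is actually implemented (the real tools handle far more than this); the `toy_hipify` function and its small lookup table are hypothetical, and only the handful of CUDA/HIP runtime names listed are covered.

```python
import re

# Small, hand-picked subset of CUDA runtime names and their HIP counterparts.
# The real HIPIFY tools cover far more; this table is only for illustration.
CUDA_TO_HIP = {
    "cudaMalloc": "hipMalloc",
    "cudaMemcpy": "hipMemcpy",
    "cudaMemcpyHostToDevice": "hipMemcpyHostToDevice",
    "cudaMemcpyDeviceToHost": "hipMemcpyDeviceToHost",
    "cudaDeviceSynchronize": "hipDeviceSynchronize",
    "cudaFree": "hipFree",
}

# Match whole identifiers that start with "cuda".
_IDENT = re.compile(r"\bcuda[A-Za-z]+\b")


def toy_hipify(source: str) -> str:
    """Rename known CUDA runtime identifiers; leave everything else untouched."""
    return _IDENT.sub(lambda m: CUDA_TO_HIP.get(m.group(0), m.group(0)), source)


cuda_snippet = (
    "cudaMalloc(&d_x, n); "
    "cudaMemcpy(d_x, x, n, cudaMemcpyHostToDevice); "
    "cudaFree(d_x);"
)
print(toy_hipify(cuda_snippet))
```

A rename like this only gets the code to compile against another vendor's runtime; as noted above, it says nothing about whether the result will perform well there.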

What is amazing in this case is that they managed to spend a fraction of the inference cost that OpenAI is paying.

Plus they are a lot cheaper too. But I am pretty sure that the American government will ban them in no time, citing national security concerns, etc.

Nevertheless, I think we need more open source models.

Not to mention that NVIDIA also needs to be brought to earth.

Even if they get banned, any startup could replicate their work if it is truly open source. The best thing about their solution is that it breaks the CUDA monopoly that NVDA has enjoyed. Buy your puts when NVDA bounces because that stock is GOING DOWN. There’s no world where a company that makes GPUs is worth more than both Apple and Microsoft. It’s inevitable.

Never forget, kids: the market can stay irrational much longer than you can stay solvent.

True. That’s why I tend to make small plays instead of being an absolute degenerate gambler.

It’s written in NVIDIA’s PTX instruction set, which is part of the CUDA ecosystem.

Hardly going to affect nvidia

I wish that was true, but this doesn’t threaten any monopoly

It certainly does. Until last week, you absolutely NEEDED an NVidia GPU equipped with CUDA to run all AI models. Today, that is simply not true. (watch the video at the end of this comment) I watched this video and my initial reaction to this news was validated and then some: this video made me even more bearish on NVDA. Edit: corrected and redacted.

mate, that means they are using PTX directly. If anything, they are more dependent on NVIDIA and the CUDA platform than anyone else.

to simplify: they are bypassing the CUDA API, not the NVIDIA instruction set architecture and not CUDA as a platform.

Ahh. Thanks for this insight.

Until last week, you absolutely NEEDED an NVidia GPU equipped with CUDA to run all AI models.

Thanks for the corrections.

The big win I see here is the amount of optimisation they achieved by moving from the high-level CUDA to lower-level PTX. This suggests that developing these models going forward can be made a lot more energy-efficient, something I hope can be extended to their execution as well. As it stands currently, “AI” (read: LLMs and image generation models) consumes way too many resources to be sustainable.

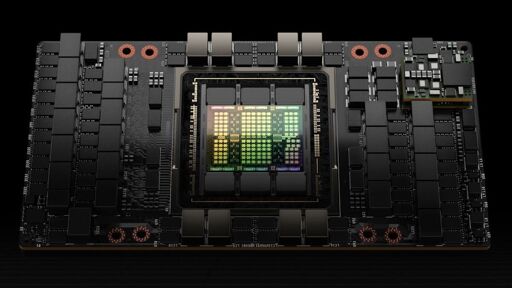

What I’m curious to see is how well these types of modifications scale with compute. DeepSeek is restricted to H800s instead of H100s or H200s. These are gimped cards to get around export controls, and accordingly they have lower memory bandwidth (~2 vs ~3 TB/s) and, most notably, much slower GPU-to-GPU communication (something like 400 GB/s vs 900 GB/s). The specific reason they used PTX in this application was to help alleviate some of the bottlenecks due to the limited inter-GPU bandwidth, so I wonder if that would still improve performance on H100 and H200 GPUs, where bandwidth is much higher.
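For a sense of scale, here is a back-of-the-envelope Python sketch using the rough inter-GPU figures quoted above. The 1 GB payload is a hypothetical number picked for illustration, and the calculation assumes an idealised link running at its full quoted rate (no latency, no protocol overhead).

```python
# Rough inter-GPU bandwidth figures from the comment above (approximate).
H800_LINK_GB_PER_S = 400.0
H100_LINK_GB_PER_S = 900.0


def transfer_ms(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Idealised time in milliseconds to move payload_gb over the link."""
    return payload_gb / bandwidth_gb_per_s * 1000.0


payload_gb = 1.0  # hypothetical amount of data exchanged between two GPUs

h800_ms = transfer_ms(payload_gb, H800_LINK_GB_PER_S)
h100_ms = transfer_ms(payload_gb, H100_LINK_GB_PER_S)

print(f"H800: {h800_ms:.2f} ms, H100: {h100_ms:.2f} ms, "
      f"ratio: {h800_ms / h100_ms:.2f}x")
```

Under these assumptions the same exchange takes 2.25 times as long on the H800 link, which is the kind of gap that makes communication-side tuning attractive there and possibly less rewarding on faster links.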

PTX also removes NVIDIA lock-in.

Kind of the opposite, actually. PTX is in essence NVIDIA-specific assembly, just like ARM or x86_64 assembly is tied to ARM and x86_64.

At least with CUDA there are efforts like ZLUDA. CUDA is more like Objective-C was on the Mac. Basically tied to the platform, but at least you could write a compiler for another target in theory.

IIRC ZLUDA does support compiling PTX. My understanding is that this is part of why Intel and AMD eventually didn’t want to support it - it’s not a great idea to tie yourself to someone else’s architecture that you have no control over or license to.

OTOH, CUDA itself is just a set of APIs and their implementations on NVIDIA GPUs. Other companies can re-implement them. AMD has already done this with HIP.

Ah, I hoped it was cross-platform, more like OpenCL. Thinking about it, a lower-level language would be more platform-specific.

Wtf, this is literally the opposite of true. PTX is NVIDIA-only.

Google was giving me bad search results about PTX so I just posted an opinion and hoped Cunningham’s Law would work.

How cunning.

Yeah, I’d like to see size comparisons too. The CUDA stack is massive.

Reminds me of Bitcoin mining and how ASIC miners overtook graphics card mining practically overnight. It would not surprise me if this goes the same way.

It’s already happening. This article takes a long look at many of the rising threats to nvidia. Some highlights:

- Google has been running on their own homemade TPUs (tensor processing units) for years, and say they are on the 6th generation of those.
- Some AI researchers are building an entirely AMD-based stack from scratch, essentially writing their own drivers and utilities to make it happen.
- Cerebras.ai is creating their own AI chips using a unique whole-die system. They make an AI chip the size of an entire silicon wafer (30cm square) with 900,000 micro-cores.

So yeah, it’s not just “China AI bad”; the entire market is catching up and innovating around NVIDIA’s monopoly.


They said this is close to metal. Wake me up when they’ve achieved metal.

I thought everyone liked to hate on Metal.

This is why Nvidia stock has been hit so hard. CUDA is their moat

Aw, CUDA see this happening…